Publications

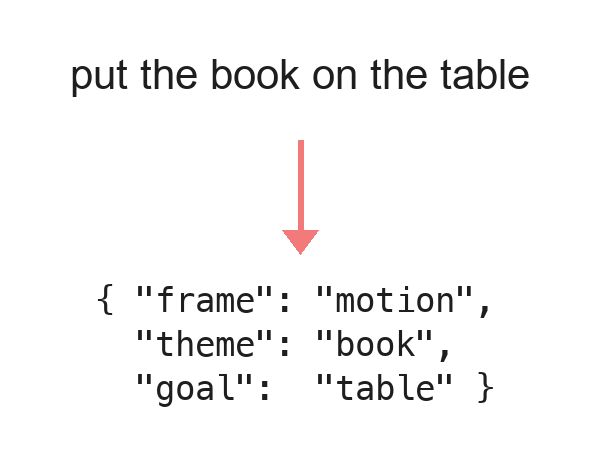

Precise Robot Command Understanding Using Grammar-Constrained Large Language Models

ASME MSEC · 2026

Large language models understand natural language but often emit commands that a robot cannot execute. We combine a fine-tuned LLM for contextual parsing with a grammar-based canonicalizer that forces outputs into a validated JSON action frame; a feedback loop re-prompts the LLM whenever the grammar parser rejects a command. On the HuRIC human-robot interaction corpus, the hybrid model produces more valid commands than either a fine-tuned LLM alone or a grammar-based NLU baseline.
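
The validate-and-re-prompt loop described above can be sketched roughly as follows. This is a minimal illustration, not the paper's implementation: the `GRAMMAR` table, the frame layout (`action` plus `args`), the function names, and the retry limit are all assumptions made for the example, and `llm` stands in for any callable that maps a prompt string to a model response.

```python
import json
from typing import Callable, Optional

# Hypothetical action grammar: action name -> required argument slots.
# The abstract does not specify the real grammar or frame schema.
GRAMMAR = {
    "pick": {"object"},
    "place": {"object", "location"},
    "move": {"location"},
}


def validate_frame(text: str) -> Optional[str]:
    """Return None if text is a valid action frame, else a rejection reason."""
    try:
        frame = json.loads(text)
    except json.JSONDecodeError as exc:
        return f"not valid JSON: {exc.msg}"
    if not isinstance(frame, dict):
        return "frame must be a JSON object"
    action = frame.get("action")
    if not isinstance(action, str) or action not in GRAMMAR:
        return f"unknown action {action!r}"
    args = frame.get("args")
    if not isinstance(args, dict):
        return "args must be a JSON object"
    missing = GRAMMAR[action] - set(args)
    extra = set(args) - GRAMMAR[action]
    if missing or extra:
        return f"missing slots {sorted(missing)}, unexpected slots {sorted(extra)}"
    return None


def parse_command(
    utterance: str,
    llm: Callable[[str], str],
    max_attempts: int = 3,  # assumed retry budget, not from the paper
) -> Optional[dict]:
    """Query the LLM, re-prompting with the rejection reason until the frame validates."""
    prompt = utterance
    for _ in range(max_attempts):
        candidate = llm(prompt)
        error = validate_frame(candidate)
        if error is None:
            return json.loads(candidate)
        # Feed the rejection reason back so the next attempt can correct it.
        prompt = (
            f"{utterance}\n"
            f"Previous output was rejected: {error}. Return a valid JSON frame."
        )
    return None  # no valid frame within the attempt budget
```

Returning `None` after the attempt budget is one possible design choice; it leaves the caller to decide whether to ask the human to rephrase or to fall back to a safe default.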

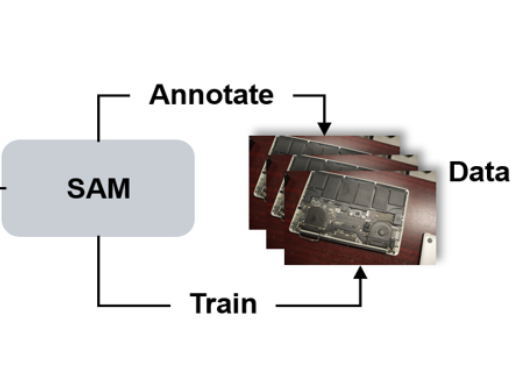

Evaluating Large and Lightweight Vision Models for Irregular Component Segmentation in E-Waste Disassembly

ASME MSEC · 2026

Foundation vision models are assumed to generalize across tasks, yet e-waste segmentation poses irregular shapes and dense packing that challenge this assumption. On 1,456 annotated RGB images of laptop components including logic boards, heat sinks, and fans, we benchmark SAM2 against the much smaller YOLOv8. YOLOv8 reaches mAP50 of 98.8% with crisper boundaries, while SAM2 falls to 8.4% despite its scale, which suggests task-specific optimization still outweighs general pre-training in industrial robotic settings.
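
For readers unfamiliar with the metric, mAP50 counts a predicted mask as correct when its intersection-over-union (IoU) with a ground-truth mask is at least 0.5. The sketch below shows only that matching criterion, with masks represented as sets of pixel coordinates for simplicity. The function names are illustrative, and the full metric additionally ranks predictions by confidence and averages precision over classes, which is omitted here.

```python
from typing import Set, Tuple

Pixel = Tuple[int, int]  # (row, column) of a foreground pixel


def mask_iou(pred: Set[Pixel], truth: Set[Pixel]) -> float:
    """Intersection-over-union of two binary masks given as pixel sets."""
    union = pred | truth
    if not union:
        return 0.0  # both masks empty: treat as no overlap
    return len(pred & truth) / len(union)


def matches_at_iou50(pred: Set[Pixel], truth: Set[Pixel]) -> bool:
    """True if the prediction counts as a match at the 0.5 IoU threshold."""
    return mask_iou(pred, truth) >= 0.5
```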

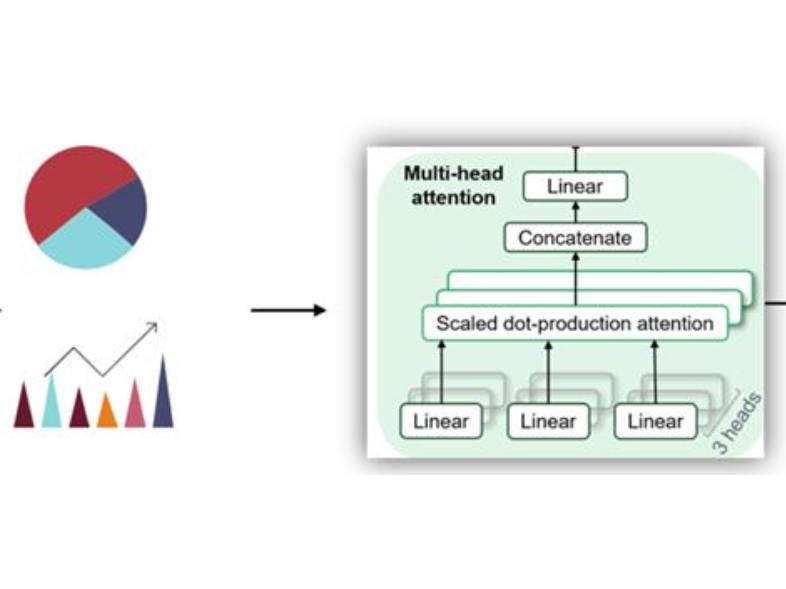

Predictive Repair Management Using Multi-Head Attention Transformer and Online Learning

ASME IDETC-CIE · 2025

Repair time prediction in maintenance shops affects resource allocation and customer expectations, yet repair records that mix categorical and numerical fields are often modeled with traditional regression. A multi-head attention transformer embeds categorical and numerical features jointly for tabular repair data, and an online learning loop updates the model as new repairs arrive. On a decade of real-world fleet repair records, the framework reaches 77.68% overall accuracy and surpasses a feed-forward baseline.

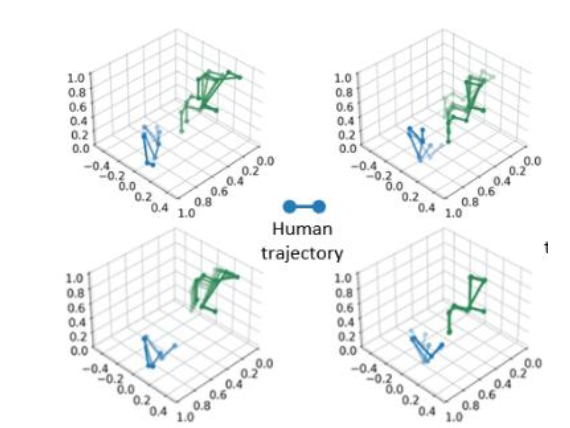

Multi-Task Learning for Intention and Trajectory Prediction in Human–Robot Collaborative Disassembly Tasks

Journal of Computing and Information Science in Engineering · 2025

Safe human-robot collaboration depends on prediction of both human intention and motion trajectory, yet these two tasks are usually trained in isolation. We propose a multi-task learning framework that shares an autoencoder's latent representation across two heads: an SVM classifies intent, and a decoder reconstructs future joint trajectories. A case study on an HRC disassembly task confirms that one shared model can deliver both predictions.

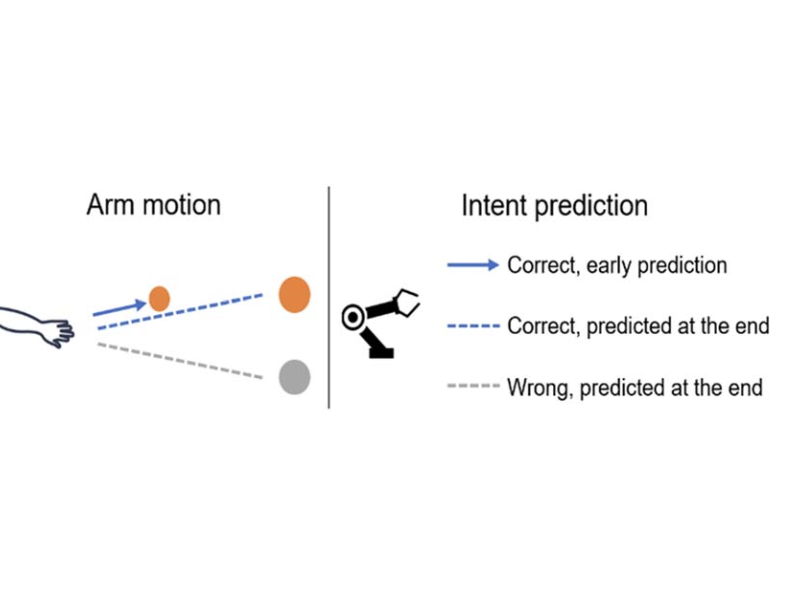

Early Prediction of Human Intention for Human–Robot Collaboration Using Transformer Network

Journal of Computing and Information Science in Engineering · 2024

In human-robot collaboration, robots typically respond only after a reaching motion completes, which limits proactive action. A Hidden Markov Model first locates the moment when human intent shifts from uncertain to certain; a Transformer and a Bi-LSTM then classify intent from the motion before this moment. Accuracy improves by 2% for the Transformer and 6% for the Bi-LSTM over full-trajectory input, and the robot gains lead time to react.

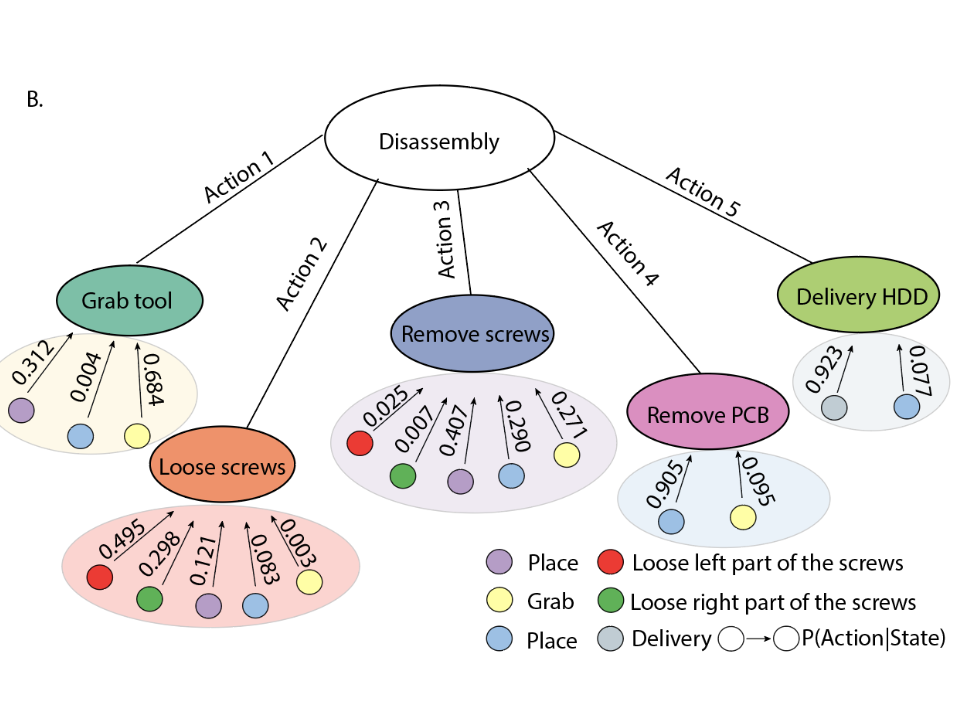

Unsupervised Human Activity Recognition Learning for Disassembly Tasks

IEEE Transactions on Industrial Informatics · 2023

Collecting labeled disassembly video is costly, and every new e-waste product requires fresh annotations. We pair a sequential VAE with an HMM to segment and classify teardown actions without labels, using hard-drive disassembly as a testbed. The framework reaches 91.52% recognition accuracy and remains stable under variations in screw number and position, outperforming PCA and autoencoder baselines.

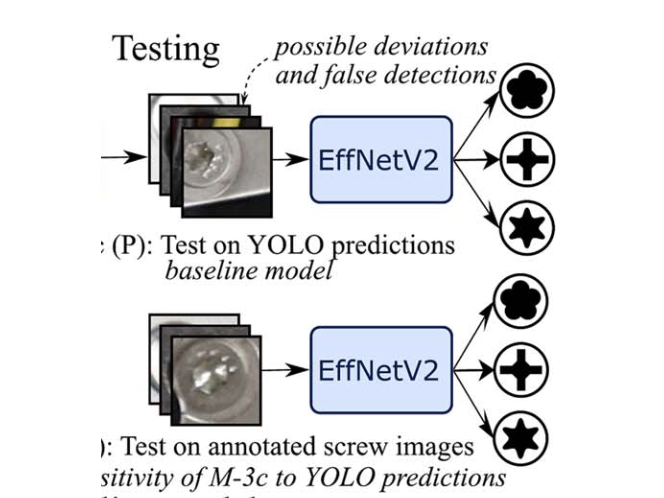

Automatic Screw Detection and Tool Recommendation System for Robotic Disassembly

Journal of Manufacturing Science and Engineering · 2022

End-of-life (EOL) electronics must be disassembled before parts can be reused, and the bottleneck is the many small, varied screws involved. YOLOv4 localizes each screw and EfficientNetV2 classifies its shape; the combined output maps to the matching screwdriver. The optimized detector reaches 0.93 recall and 0.92 F1 on three screw types tested in EOL devices, and the robot receives both a screw location and a tool choice in one pass.
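
The final step, turning a detected box and a classified screw shape into a location plus a tool choice, might look like the sketch below. It assumes the detector and classifier have already run. The screw type names, tool names, confidence threshold, and data classes are placeholders for illustration; the abstract does not name the three screw types or describe the robot interface.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Placeholder mapping: the paper's actual screw types and tools are not listed here.
TOOL_FOR_SCREW = {
    "phillips": "phillips_driver",
    "torx": "torx_driver",
    "slotted": "flat_driver",
}


@dataclass(frozen=True)
class Detection:
    box: Tuple[float, float, float, float]  # x_min, y_min, x_max, y_max in pixels
    screw_type: str                         # label from the shape classifier
    confidence: float                       # detector score in [0, 1]


@dataclass(frozen=True)
class ToolCommand:
    x: float   # box center, image coordinates
    y: float
    tool: str


def plan_tool_commands(
    detections: List[Detection],
    min_confidence: float = 0.5,  # assumed cutoff for the example
) -> List[ToolCommand]:
    """Map each confident, recognized screw to its box center and matching tool."""
    commands = []
    for det in detections:
        if det.confidence < min_confidence:
            continue  # skip low-confidence detections
        tool = TOOL_FOR_SCREW.get(det.screw_type)
        if tool is None:
            continue  # unknown screw type: no tool to recommend
        x_min, y_min, x_max, y_max = det.box
        commands.append(ToolCommand((x_min + x_max) / 2, (y_min + y_max) / 2, tool))
    return commands
```

Skipping unknown screw types rather than guessing a tool is a conservative choice for this sketch; a real system would also need to convert image coordinates into the robot's frame.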